Solving Indoor AR Limitations: Osaka University's Breakthrough

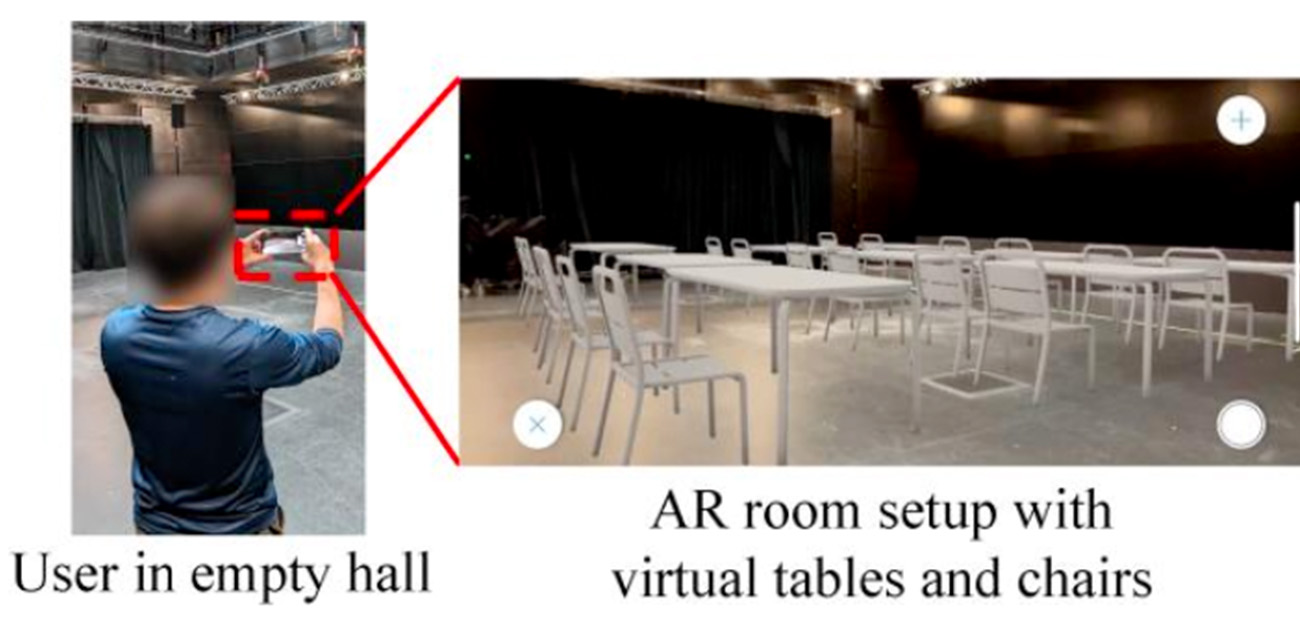

Augmented reality (AR) applications are popular for overlaying visual elements on smartphone camera images, but they struggle indoors, where GPS signals are poor. Researchers from Osaka University have run extensive experiments to pinpoint the problems and propose ways to improve AR technology.

**Key Challenges**: For AR to work well, a smartphone must accurately localize and track itself using visual sensors such as cameras and LiDAR, together with inertial measurement units (IMUs). Each of these has limitations: visual landmarks are hard to detect in poor lighting, at extreme angles, or from long distances; LiDAR performance can vary; and IMU errors accumulate over time, so virtual elements "drift" away from their intended positions, which may cause motion sickness.

**Research Findings**: Over 113 hours of experiments covering 316 patterns, the researchers explored how AR fails in various environments, including a virtual classroom setup. They found that a major obstacle is the difficulty of finding consistent visual landmarks indoors.

**Proposed Solutions**: To overcome these challenges, the team suggests radio-frequency localization such as ultra-wideband (UWB) sensing, which does not rely on visual cues and is much less affected by lighting or line-of-sight problems. UWB, already used in products such as Apple AirTags, offers more robust localization. The findings suggest that combining radio-frequency-based localization with existing vision-based methods could substantially improve indoor AR applications.
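To make the drift problem concrete, here is a minimal one-dimensional sketch of why IMU-only tracking drifts and how an absolute radio-frequency position fix can bound that error. It is not the researchers' method: the stationary-phone scenario, the bias and noise values, the alpha-beta correction filter, and the `simulate` function name are all illustrative assumptions, and the UWB fix is modeled simply as a noisy reading of the true position.

```python
import random


def simulate(steps=600, dt=0.1, accel_bias=0.05, accel_noise=0.02,
             uwb_noise=0.10, alpha=0.2, beta=0.02, seed=0):
    """Compare IMU-only dead reckoning with a UWB-corrected estimate.

    Hypothetical scenario: the phone is stationary, so the true position
    is 0 m at every step. All parameter values are assumptions chosen
    for illustration, not measurements from the study.
    """
    rng = random.Random(seed)
    x_imu = v_imu = 0.0      # IMU-only position and velocity estimates
    x_fused = v_fused = 0.0  # estimates corrected by UWB position fixes

    for _ in range(steps):
        # Accelerometer reading: true acceleration is 0, so only a small
        # constant bias plus noise is measured.
        accel = accel_bias + rng.gauss(0.0, accel_noise)

        # Dead reckoning: integrate acceleration twice. The bias is
        # integrated too, so the position error keeps growing.
        v_imu += accel * dt
        x_imu += v_imu * dt

        # Fused estimate: same IMU prediction step...
        v_fused += accel * dt
        x_fused += v_fused * dt

        # ...followed by an alpha-beta correction toward a noisy absolute
        # position fix (a stand-in for a UWB measurement).
        uwb_fix = rng.gauss(0.0, uwb_noise)
        residual = uwb_fix - x_fused
        x_fused += alpha * residual
        v_fused += (beta / dt) * residual

    # Absolute position errors in meters (true position is 0).
    return abs(x_imu), abs(x_fused)


if __name__ == "__main__":
    imu_err, fused_err = simulate()
    print(f"IMU-only error after 60 s: {imu_err:.2f} m")
    print(f"UWB-corrected error after 60 s: {fused_err:.2f} m")
```

In this toy model the IMU-only error grows roughly quadratically with time because the constant bias is integrated twice, while the corrected estimate stays bounded because each absolute fix pulls both position and velocity back toward the truth. A real system would fuse 3D pose with camera and LiDAR tracking as well, typically with a Kalman-style filter rather than fixed gains.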